Overview

The LIGM is a computer science laboratory located on the Cité Descartes campus in Champs-sur-Marne. It is spread across three buildings: ESIEE, the Copernic building, and the Coriolis building.

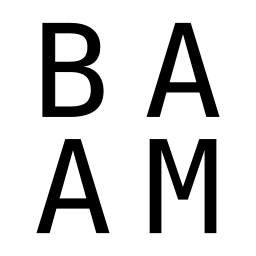

The laboratory has six research teams, with a total of 80 permanent researchers and 50 non-permanent researchers. The teams are A3SI (algorithms, architectures, image analysis and synthesis), ADA (discrete algorithmics and applications), BAAM (databases, automata, analysis of algorithms and models), COMBI (algebraic combinatorics and symbolic computation), LRT (software, networks and real-time systems), and SIGNAL (signal and communication).

LIGM members are involved in teaching computer science at the Institut Gaspard-Monge, the IUT de Marne-la-Vallée, ESIEE Paris, and the École des Ponts ParisTech.